Opening

Anthropic signed a 300MW compute deal with SpaceX and doubled Claude Code rate limits in the same week it shipped a vertical agent framework for financial services. These are not separate announcements. They are the same move: Anthropic is eliminating the infrastructure objection at the same time it hands operators the workflows to run on top of it. The labs that win over the next two years will not win on model quality alone. They will win by removing every excuse not to build.

Today's Signals

Latent Space reports Anthropic’s ARR growth is running at 8,000% annualized. The SpaceX partnership adds 300MW of dedicated compute capacity, and Claude Code rate limits are doubling as a direct result. This is not a benchmark announcement. It is a capacity announcement. (latent.space)

Anthropic formally announced expanded compute and increased API and Claude Code usage limits tied to the SpaceX infrastructure deal. The increased limits take effect across Pro, Team, and Enterprise tiers. (anthropic.com/news)

Anthropic launched a $1.5B enterprise services venture with Blackstone and Goldman Sachs. OpenAI simultaneously announced a $4B Deployment Company. Both are betting that the next revenue layer is wiring agents into enterprise production systems, not the model itself. (latent.space)

Simon Willison explores the narrowing gap between casual AI-assisted coding and production agentic engineering. His argument: the two disciplines are converging faster than most teams have planned for. Worth reading before your next internal estimate. (simonwillison.net)

Anthropic released an agent framework targeting financial services workflows. It is a vertical-specific agent toolkit rather than a general-purpose API, and the first of its kind from a frontier lab. The framework includes pre-built templates for common financial operations. (anthropic.com/news)

What's Moving

- [Tool] design-extract — Extract any website’s complete design system with one command. The tool pulls design tokens, typography scale, spacing, color palettes, and component patterns, then exports them as Figma tokens, a Tailwind config, or CSS custom properties, depending on what you need. It also ships an MCP server, so an agent can query a design system mid-build without leaving the session. For anyone reverse-engineering a client’s brand before a rebuild, this replaces two to three hours of manual inspection. 2,284 stars. (github.com/Manavarya09/design-extract)
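To illustrate the CSS output path, here is a minimal Python sketch that turns a nested token dictionary into CSS custom properties. The token shape and the function names (`flatten_tokens`, `tokens_to_css`) are hypothetical. They are not design-extract's actual output schema or API.

```python
from collections.abc import Mapping
from typing import Iterator


def flatten_tokens(tokens: Mapping, prefix: str = "") -> Iterator[tuple[str, str]]:
    """Yield (name, value) pairs, joining nested keys with hyphens."""
    for key, value in tokens.items():
        name = f"{prefix}-{key}" if prefix else key
        if isinstance(value, Mapping):
            # Nested group, e.g. {"color": {"primary": ...}} -> "color-primary"
            yield from flatten_tokens(value, name)
        else:
            yield name, str(value)


def tokens_to_css(tokens: Mapping) -> str:
    """Render a token tree as CSS custom properties on :root."""
    lines = [f"  --{name}: {value};" for name, value in flatten_tokens(tokens)]
    return ":root {\n" + "\n".join(lines) + "\n}"


# Hypothetical tokens, shaped the way an extractor might report them.
tokens = {
    "color": {"primary": "#1a1a1a", "accent": "#ff5a5f"},
    "space": {"sm": "4px", "md": "8px"},
    "font": {"size": {"body": "16px"}},
}
print(tokens_to_css(tokens))
```

Each leaf token becomes one line such as `--color-primary: #1a1a1a;` inside a single `:root` block. Nested groups are joined with hyphens, so `font.size.body` becomes `--font-size-body`.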

Partner

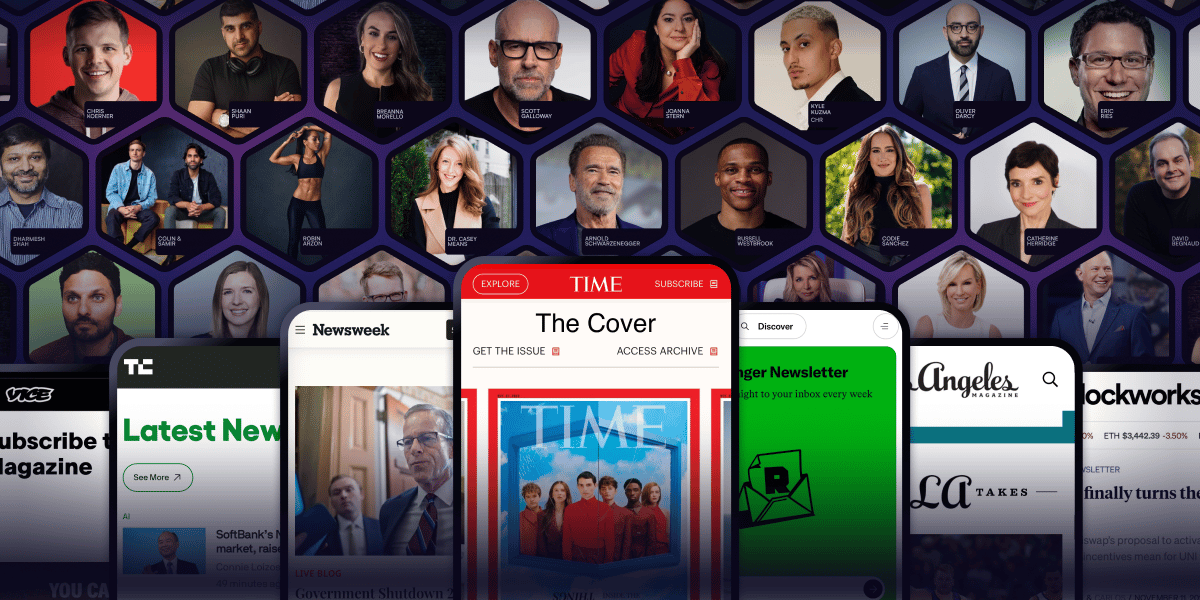

Arnold Schwarzenegger has a newsletter.

Yeah. That Arnold Schwarzenegger.

So do Codie Sanchez, Scott Galloway, Colin & Samir, Shaan Puri, and Jay Shetty. And none of them are doing it for fun. They're doing it because a list you own compounds in ways that social media never will.

beehiiv is where they built it. You can start yours for 30% off your first 3 months with code PLATFORM30. Start building today.

The Frame

The complaint a year ago was: "Claude Code is interesting, but the rate limits make it unusable in production." Anthropic just doubled the limits. Before that, the complaint was: "Agents are impressive demos, but there is no enterprise framework." Anthropic shipped vertical templates for financial services. Before that: "The context costs make long-running agents uneconomical." mcp2cli just cut per-call overhead by 98%.

Every objection is getting addressed. Not randomly, and not slowly. The SpaceX deal matters because compute constraints were the last credible structural argument for waiting. If you could not sustain a production agent workflow at scale, you had a real reason to hold off building one. That reason is now gone. Anthropic is not adding capacity to handle existing demand. It is adding capacity to remove the ceiling before the demand arrives.

The services ventures from Anthropic and OpenAI follow the same logic. Selling API access is a thin-margin business when four labs sell equivalent access. The margin is in deployment: the configuration work, the integration depth, the production hardening that no API call handles on its own. Both labs are racing to be inside the enterprise stack before the integration layer gets commoditized too.

The operators who will look back at 2026 as the year they built their lead are not waiting for the infrastructure to mature further. They are building now, on the capacity that exists today, against the frameworks that shipped this week. The SpaceX deal does not change what Claude can do. It changes how many people can do it at the same time.

On the Radar

[Repo] strukto-ai/mirage — A unified virtual filesystem layer for AI agents. Mirage gives agents a sandboxed file abstraction so they can read, write, and organize without touching the host disk. If you have been duct-taping temp directories for multi-step agent workflows, this is the clean answer. 738 stars since May 6. (github.com/strukto-ai/mirage)
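Here is a minimal in-memory Python sketch of what a sandboxed file abstraction means. It is not Mirage's API, and the `VirtualFS` class and its methods are hypothetical. Every path is normalized against a virtual root, so `..` segments cannot climb out, and nothing is ever written to the host disk.

```python
import posixpath


class VirtualFS:
    """In-memory file store for an agent; nothing here touches the host disk."""

    def __init__(self) -> None:
        self._files: dict[str, str] = {}

    @staticmethod
    def _normalize(path: str) -> str:
        # Anchor every path at a virtual root and collapse "." and ".." segments,
        # so "../../etc/passwd" resolves to "/etc/passwd" inside the sandbox.
        return posixpath.normpath(posixpath.join("/", path.lstrip("/")))

    def write(self, path: str, content: str) -> None:
        self._files[self._normalize(path)] = content

    def read(self, path: str) -> str:
        return self._files[self._normalize(path)]  # KeyError if the file is missing

    def listdir(self, directory: str = "/") -> list[str]:
        root = self._normalize(directory)
        prefix = root if root.endswith("/") else root + "/"
        # Immediate children: the first path segment after the directory prefix.
        children = {p[len(prefix):].split("/", 1)[0] for p in self._files if p.startswith(prefix)}
        return sorted(children)


fs = VirtualFS()
fs.write("notes/plan.md", "step 1: draft the schema")
fs.write("/scratch/../out/result.json", '{"ok": true}')
print(fs.listdir("/"))                    # ['notes', 'out']
print(fs.read("../../out/result.json"))   # {"ok": true}
```

A production layer would likely add persistence and access controls. This sketch only shows the isolation idea: the agent sees paths, but the host filesystem is never involved.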

[Repo] mcp2cli — Exposes MCP servers as command-line tools, so an agent calls them through the shell instead of carrying native MCP tool definitions in context. The result is a 98% cut in per-call context overhead. Native MCP tool definitions consume 50 to 100 tokens per call; mcp2cli compresses that to 1 to 4. For long-running agent sessions that burn through context windows on tool scaffolding, this is a direct cost reduction. Gained traction on HN this week.
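The savings are easy to check with the figures quoted above. This Python sketch computes the percentage reduction and the total tokens saved over a session. The 500-call session length is a hypothetical example, not a measured workload.

```python
def overhead_reduction_pct(before_tokens: int, after_tokens: int) -> float:
    """Percentage of per-call tool overhead removed."""
    return 100 * (before_tokens - after_tokens) / before_tokens


def session_tokens_saved(calls: int, before_tokens: int, after_tokens: int) -> int:
    """Total context tokens saved across a session of `calls` tool calls."""
    return calls * (before_tokens - after_tokens)


print(overhead_reduction_pct(50, 1))        # 98.0
print(overhead_reduction_pct(100, 4))       # 96.0
print(session_tokens_saved(500, 100, 4))    # 48000
```

At the quoted extremes, going from 50 tokens to 1 is a 98% cut and going from 100 to 4 is a 96% cut. The headline figure therefore reflects the best end of the range.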

[Skill] MCP-Shield — Security auditing tool for MCP servers. Scans for permission misconfigurations, data exposure vectors, and server-level gaps. As more operators wire production systems through MCP, auditing what agents can access stops being theoretical.

The Onboard

The two-prompt pattern separates architecture from implementation, and it prevents the most common Claude Code failure mode: a plausible-looking build that has no coherent structure underneath it.

Before asking Claude to build anything, run a planning prompt: "Design the data model, API routes, and component tree for X. Do not write any implementation code." Read the plan. Push back on anything that looks wrong. Then send the build prompt as a separate message: "Implement the plan above."

The planning step forces structural decisions into the open before code exists. Without it, Claude makes those decisions implicitly during implementation, and you do not see them until something breaks. The explicit separation costs one extra prompt. It saves the refactor you would have done on day three.
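A scripted version of the same two-step flow might look like the Python sketch below. The `ask` callable stands in for whatever Claude client you use. It is assumed to take a list of role/content messages and return the reply text. `review` is a hypothetical hook where you read the plan and return pushback, or return an empty string to accept it. Both prompts run in one conversation, so "the plan above" is actually in context.

```python
from typing import Callable

Message = dict[str, str]

PLAN_PROMPT = (
    "Design the data model, API routes, and component tree for {feature}. "
    "Do not write any implementation code."
)
BUILD_PROMPT = "Implement the plan above."


def two_prompt_build(
    feature: str,
    ask: Callable[[list[Message]], str],
    review: Callable[[str], str],
) -> str:
    """Plan first, let a human push back once, then build in the same conversation."""
    messages: list[Message] = [{"role": "user", "content": PLAN_PROMPT.format(feature=feature)}]
    plan = ask(messages)
    messages.append({"role": "assistant", "content": plan})

    # The human reads the plan; an empty string means "looks right".
    feedback = review(plan)
    if feedback:
        messages.append({"role": "user", "content": feedback})
        plan = ask(messages)
        messages.append({"role": "assistant", "content": plan})

    # Only now ask for code, with the agreed plan already in context.
    messages.append({"role": "user", "content": BUILD_PROMPT})
    return ask(messages)
```

Keeping `review` as a separate hook is the point of the pattern. The structural decisions surface as text you can reject before any code exists.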

(Pattern surfaced via r/ClaudeAI, 976 upvotes)

Builder's Brief

Wedding Vendor Inquiry Triage

Wedding photographers and florists spend peak season answering the same inquiry 40 times a month. Same questions, same format, same decision to make: is this lead worth a phone call? The product's job is straightforward: read the inquiry, draft a reply in the vendor's voice, and sort the inbox by who is worth calling back.

The stack has three components. An IMAP poller (or a webhook from HoneyBook or a contact form) feeds incoming inquiries to a Claude agent. The agent extracts the date, budget, and a handful of vibe signals. It scores each lead from 1 to 3 and drafts a personalized reply, using five of the vendor's past emails as voice examples. A simple dashboard shows the inbox sorted by score, and the vendor either clicks send or edits the draft first.
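One way to keep the agent's output predictable is to ask for structured JSON and validate it before anything reaches the dashboard. The Python sketch below builds the triage prompt from the vendor's example emails and parses the reply. The prompt wording, the JSON field names, and the `Triage` dataclass are assumptions for illustration, and the actual model call is left out.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Triage:
    event_date: Optional[str]
    budget: Optional[int]
    score: int          # 1 = low priority, 3 = call back first
    draft_reply: str


def build_triage_prompt(inquiry: str, voice_examples: list[str]) -> str:
    """Few-shot prompt: the vendor's past emails set the voice for the draft."""
    examples = "\n\n".join(
        f"Example {i}:\n{text}" for i, text in enumerate(voice_examples, start=1)
    )
    return (
        "You triage inquiries for a wedding vendor.\n\n"
        f"Replies the vendor has written before:\n\n{examples}\n\n"
        "From the inquiry below, extract the event date and budget, score the lead "
        "from 1 (low) to 3 (call back first) using any fit signals in the message, "
        "and draft a reply in the vendor's voice.\n"
        'Respond with JSON only, using the keys "event_date", "budget", "score", and "draft_reply".\n\n'
        f"Inquiry:\n{inquiry}"
    )


def parse_triage(raw: str) -> Triage:
    """Validate the model's JSON before it reaches the dashboard."""
    data = json.loads(raw)
    score = int(data["score"])
    if score not in (1, 2, 3):
        raise ValueError(f"score out of range: {score}")
    budget = data.get("budget")
    return Triage(
        event_date=data.get("event_date"),
        budget=int(budget) if budget is not None else None,
        score=score,
        draft_reply=str(data["draft_reply"]),
    )
```

Rejecting an out-of-range score here is cheaper than discovering a mis-sorted inbox in peak season. A failed parse can simply be retried or flagged for manual review.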

Build this in a day with Claude Code. SQLite for storage, SMTP for sending, a lightweight Express server for the dashboard. No external services required at the MVP stage.
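The storage layer could be as small as one table. This sketch uses Python's built-in `sqlite3` module to stay self-contained. The brief's stack puts an Express server in front, and the same SQL works from Node through any SQLite driver. The table and column names are hypothetical.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS inquiries (
    id INTEGER PRIMARY KEY,
    sender TEXT NOT NULL,
    received_at TEXT NOT NULL,                      -- ISO 8601 timestamp
    score INTEGER NOT NULL CHECK (score BETWEEN 1 AND 3),
    draft_reply TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'pending'          -- 'pending' or 'sent'
)
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def save_inquiry(conn, sender, received_at, score, draft_reply) -> int:
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO inquiries (sender, received_at, score, draft_reply) VALUES (?, ?, ?, ?)",
            (sender, received_at, score, draft_reply),
        )
    return cur.lastrowid


def dashboard_rows(conn):
    """Pending inquiries, best leads first; oldest first within the same score."""
    return conn.execute(
        "SELECT id, sender, score FROM inquiries WHERE status = 'pending' "
        "ORDER BY score DESC, received_at ASC"
    ).fetchall()


def mark_sent(conn, inquiry_id: int) -> None:
    with conn:
        conn.execute("UPDATE inquiries SET status = 'sent' WHERE id = ?", (inquiry_id,))
```

The `CHECK` constraint backs up the 1-to-3 scale at the database level. A bad score raises `sqlite3.IntegrityError` instead of quietly landing in the inbox.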

Pricing: $79 per month, or $20 per inquiry batch for vendors who prefer seasonal billing. Seasonal pricing converts better in a seasonal business. Find your first beta tester by posting a 30-second screen recording of the dashboard in a wedding photographer Facebook group. Let peak season do the selling.
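The two price points imply a simple break-even, sketched in Python below. The $79 and $20 figures come from the brief. The brief does not say how many inquiries make up a batch, so the comparison is in batches per month.

```python
import math

MONTHLY_PRICE = 79   # dollars per month
BATCH_PRICE = 20     # dollars per inquiry batch


def cheaper_plan(batches_per_month: int) -> str:
    """Return which plan costs the vendor less for a given month."""
    return "per-batch" if batches_per_month * BATCH_PRICE < MONTHLY_PRICE else "monthly"


# Smallest batch count at which per-batch billing is no longer cheaper.
break_even = math.ceil(MONTHLY_PRICE / BATCH_PRICE)
print(break_even)          # 4
print(cheaper_plan(3))     # per-batch
print(cheaper_plan(4))     # monthly
```

A vendor who needs three or fewer batches in a month pays less on per-batch billing. At four or more, the flat plan is cheaper.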

One thing that can blow up the MVP scope: Instagram DMs have no clean API. Keep the first version to email and contact-form inquiries only.

Before You Go

Anthropic is solving compute. mcp2cli is solving context cost. Vertical frameworks are solving workflow. If tooling, infrastructure, and integration templates are all being addressed in the same quarter, what is the actual remaining barrier to shipping an agent-based business?

Forward this to a builder who needs it.